A Road Map of Nonparametric Efficiency in Causal Inference

Causal inference promises to answer “what if” questions from observational data. But the standard approach — specify a parametric model, fit it, report the estimate — breaks down under misspecification. And misspecification is the norm, not the exception. This post draws from my notes on (Kennedy 2023). I walk through the core ideas of nonparametric efficiency theory — the framework that lets us pair flexible machine learning with rigorous inference. The central character is the Efficient Influence Function, a single mathematical object that tells us how to build estimators, why they work, and when we can trust their confidence intervals.

1. Motivation

First let’s keep the casual and statistical issues separate.

Causal to Statistical: The target causal parameter (e.g., ATE) can often be identified as a statistical functional of the observed data distribution.

$$\psi: \mathcal{P} \mapsto \mathbb{R}$$

Once identified, the causal problem becomes a pure functional estimation problem.

The Problem with Parametrics: Parametric models are likely misspecified, leading to biased estimates of the functional.

The Problem with Naive Nonparametrics: While we should use flexible machine learning (nonparametric) methods to avoid misspecification, simple “plug-in” estimators (fitting models and plugging predictions into the formula) fail because:

-

They are generally not $\sqrt{n}$-consistent (converge too slowly).

-

They do not yield valid Confidence Intervals.

The Solution: We need Efficiency Theory to understand the theoretical limit of performance and Influence Functions to construct estimators that reach that limit.

2. Efficiency Theory (Lower Bounds)

Now let’s build the “best” estimator.

The Benchmark: We want to find the best possible performance (lowest Mean Squared Error) any estimator can achieve.

Parametric Intuition: In parametric models, the Cramer-Rao (CR) lower bound sets this benchmark: no unbiased estimator can have a variance lower than the inverse Fisher information.

But now we are in the “nonparametric world”, how to exploit CR lower bound?

The “Submodel” Trick: To apply CR bounds to infinite-dimensional nonparametric models:

-

We define a Parametric Submodel: a smooth, one-dimensional path through the complex nonparametric space that passes through the true distribution.

-

Logic: If we cannot estimate the parameter well in this simple “parametric” slice, we certainly cannot do it in the full nonparametric model.

-

Result: The hardest submodel defines the Local Minimax Lower Bound.

3. The Unified Approach: Central Role of the EIF

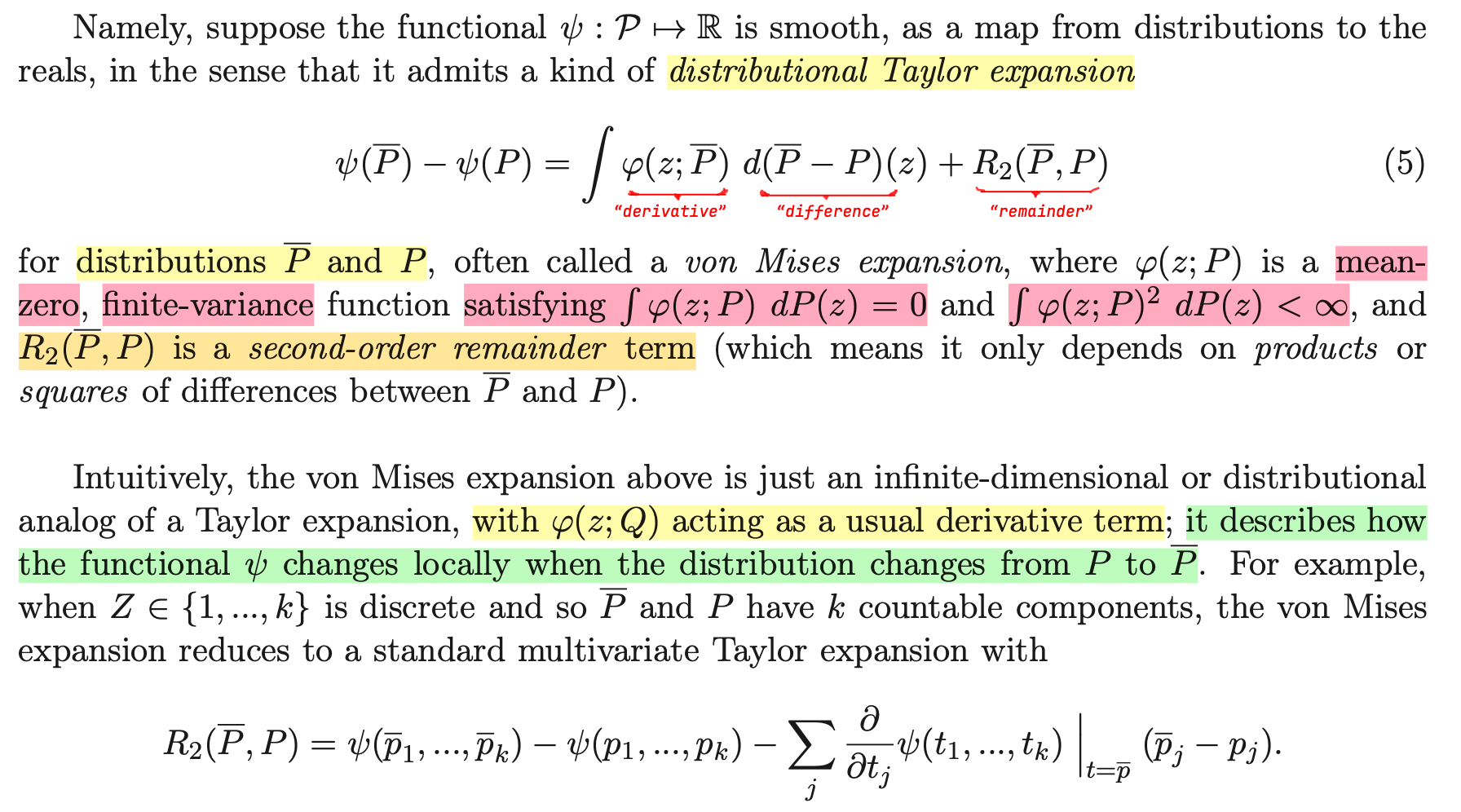

The Efficient Influence Function (EIF) is the “master key.” It is the derivative term in a Distributional Taylor Expansion1 (von Mises expansion):

This single expansion solves all three statistical tasks:

A. How to construct the estimator? (The Recipe)

The expansion reveals that the bias of a naive plug-in estimator is roughly the expected value of the EIF.

Recipe: We construct a One-Step Estimator by taking the naive plug-in estimator and adding the empirical average of the estimated EIF to “de-bias” it.

$$ \hat{\psi}_{\text{one-step}} = \text{Plug-in} + \frac{1}{n}\sum \text{EIF}(\text{Data}) $$

Click to expand: Deconstructing the One-Step Estimator

The Intuition: A “Newton-Raphson” Correction

Think of it like Newton’s method in calculus: if you want to find the root of a function, you make an initial guess, calculate the derivative (slope) at that point, and use it to take a “step” closer to the true answer.

In this context:

-

The Plug-in is your initial “guess” at the parameter.

-

The EIF acts as the “derivative” (gradient) that tells you which direction to move to fix the error.

-

The Formula represents taking that single “step” to correct the bias of your initial guess.

Deconstructing the Formula

$$ \hat{\psi}_{\text{one-step}} = \underbrace{\psi(\hat{P})}_{\text{Plug-in}} + \underbrace{\frac{1}{n}\sum_{i=1}^n \phi(Z_i; \hat{P})}_{\text{Bias Correction}} $$Part A: The Naive Plug-in $\psi(\hat{P})$

This is the estimate you get if you train your machine learning models (like regression or propensity scores), estimating the whole distribution $\hat{P}$, and simply “plug them in” to the formula for your parameter.

Why it fails: Flexible machine learning models trade bias for variance (regularization). They “smooth” the data to avoid overfitting. While this is good for predicting individual outcomes, it creates a first-order bias in the target parameter that does not vanish fast enough (slower than $1/\sqrt{n}$). If you stop here, your confidence intervals will be wrong.

Part B: The Bias Correction $\frac{1}{n}\sum \text{EIF}$

This term estimates the bias of the plug-in and removes it. The logic relies on the Distributional Taylor Expansion, which tells us that the error of the plug-in estimator is approximately equal to the negative expectation of the Influence Function:

$$ \psi(\hat{P}) - \psi(P_{\text{true}}) \approx -\mathbb{E}[\text{EIF}] $$

Since the error is roughly $-\mathbb{E}[\text{EIF}]$, we can “cancel out” this error by adding the empirical average of the EIF estimated from our data. Note: if our initial estimate $\hat{P}$ were perfect (the truth), the average of the EIF would be exactly zero. The fact that this term is not zero reflects the bias in our initial model that needs to be corrected.

Concrete Example: Average Treatment Effect (ATE)

To make this concrete, consider the ATE. This specific “One-Step” estimator is mathematically identical to the famous AIPW (Augmented Inverse Probability Weighting) or Doubly Robust estimator.

-

The Plug-in: You train a regression model to predict outcomes for treated vs. untreated groups. You calculate the average difference. The problem? If your regression is slightly wrong (which it always is), your effect estimate is biased.

-

The EIF Correction: You look at the residuals — the difference between what your model predicted and what actually happened, weighted by the propensity score (the probability of treatment). If your model consistently under-predicts outcomes for the treated group, the EIF term will be positive. Adding this average EIF to the plug-in “bumps” the estimate up, correcting the bias.

Summary

The “One-Step Estimator” recipe acknowledges that modern Machine Learning is great at learning patterns (the Plug-in) but bad at getting the total volume/area right (Bias). By adding the average of the Efficient Influence Function (the derivative), you explicitly calculate that missing “volume” and add it back in, ensuring the final estimate is unbiased and efficient.

B. How to analyze the estimator? (The Proof)

The expansion includes a Remainder Term ($R_2$). To prove the estimator is $\sqrt{n}$-consistent, we must show this remainder is negligible (second-order).

Sample Splitting: We need to use cross-fitting to handle the “empirical process term” (preventing overfitting when estimating nuisance parameters).

C. How to correct the bias? (Double Robustness)

The math of the EIF reveals that the error depends on the product of errors of the nuisance parameters (e.g., error in outcome regression $\times$ error in propensity score).

This yields Double Robustness: even if individual machine learning models converge slowly (e.g., $n^{-1/4}$), their product converges fast enough ($n^{-1/2}$) to allow for valid inference.

4. The Link Between EIF and Riesz Representer

The connection between the Efficient Influence Function (EIF) and the Riesz Representer (RR) is the bridge between theoretical statistics and practical machine learning.

The Deconstruction of the EIF

We already know the EIF is the “correction term” we add to a naive plug-in estimator to fix bias. The Riesz Representer is the key component inside that EIF.

For a vast class of problems (linear functionals), the EIF always takes this specific structure:

$$ \underbrace{\phi(Z)}_{\text{EIF}} = \underbrace{\psi(P)}_{\text{Plug-in}} + \underbrace{\alpha(X, W)}_{\text{Riesz Representer}} \times \underbrace{(Y - \mu(X, W))}_{\text{Outcome Residual}} $$-

$\mu(X, W)$: The conditional expectation (the outcome model, e.g., regression of $Y$ on $X, W$).

-

$\alpha(X, W)$: The Riesz Representer. It tells us how much weight to give each residual to correct the bias.

-

The EIF says: “To fix bias, look at your regression errors ($Y - \mu$).”

-

The RR says: “Here is exactly how to weight those errors for this specific causal problem.”

Takeway

Here is the takeaway. Nonparametric efficiency theory is not an abstraction separate from practice — it is the practice. The EIF tells us what to estimate, the Riesz Representer tells us how to weight the errors, and double robustness explains why the whole thing holds together even when our models are imperfect. If we use AIPW, DML, or any doubly robust estimator, we are already using these ideas. The theory simply makes explicit what those estimators are doing and why they deserve our trust.

Reference

Kennedy, Edward H. (2023), “Semiparametric doubly robust targeted double machine learning: a review.”

Williams, Nicholas T., Oliver J. Hines, and Kara E. Rudolph (2026), “Riesz representers for the rest of us.”

Chernozhukov, Victor, Whitney K. Newey, Victor Quintas-Martinez, and Vasilis Syrgkanis (2024), “Automatic debiased machine learning via riesz regression.”

Chernozhukov, Victor, Whitney K. Newey, and Rahul Singh (2022), “Automatic Debiased Machine Learning of Causal and Structural Effects,” Econometrica, 90 (3), 967–1027.

-

The Distributional Taylor Expansion is essentially the standard Taylor expansion applied at the distribution scale rather than the real-number scale. It is exactly equivalent to the concept of pathwise differentiability — both formalize the idea of taking a derivative of a statistical functional with respect to perturbations of the underlying distribution. ↩︎