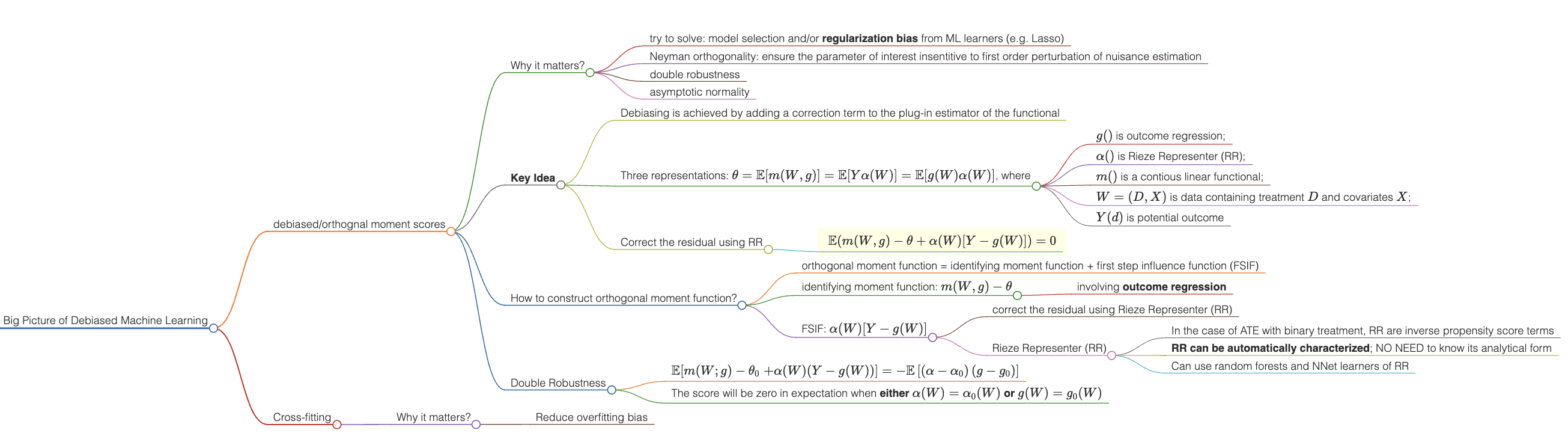

Big Picture of Debiased Machine Learning

Debiased machine learning (DML) is a generic recipe. The idea behind it is adding a correction term to the plug-in estimator of the functional, which leads to properties such as semi-parametric efficiency, double robustness, and Neyman orthogonality.

(Auto)-DML is a Method-of-Moments estimator

-

debiased/orthognal moment scores

-

Why it matters?

- try to solve: model selection and/or regularization bias from ML learners (e.g. Lasso)

- Neyman orthogonality: ensure the parameter of interest insentitive to first order perturbation of nuisance estimation

- double robustness

- asymptotic normality

-

Key Idea: Debiasing is achieved by adding a correction term to the plug-in estimator of the functional

-

Three representations: $\theta = \mathbb{E}[m(W,g)] = \mathbb{E}[Y\alpha(W)] = \mathbb{E}[g(W)\alpha(W)]$, where

- $g()$ is outcome regression;

- $\alpha()$ is Rieze Representer (RR);

- $m()$ is a contious linear functional;

- $W = (D, X)$ is data containing treatment $D$ and covariates $X$;

- $Y(d)$ is potential outcome

-

Correct the residual using RR

- $\mathbb{E}\{m(W,g) - \theta + \alpha(W)[Y-g(W)]\} = 0$

-

-

How to construct orthogonal moment function?

-

orthogonal moment function = identifying moment function + first step influence function (FSIF)

-

identifying moment function: $m(W,g) - \theta$

- involving outcome regression

-

FSIF: $\alpha(W)[Y-g(W)]$

- correct the residual using Rieze Representer (RR)

- Rieze Representer (RR)

- In the case of ATE with binary treatment, RR are inverse propensity score terms

- RR can be automatically characterized; NO NEED to know its analytical form

- Can use random forests and NNet learners of RR

-

-

Double Robustness

-

$\mathbb{E}[m(W ; g) -\theta_0 \left.+\alpha(W)(Y-g(W))\right] =-\mathbb{E}\left[\left(\alpha-\alpha_0\right)\left(g-g_0\right)\right]$

-

The score will be zero in expectation when either $\alpha(W) = \alpha_0(W)$ or $g(W) = g_0(W)$

-

-

-

Cross-fitting

- Why it matters?

- Reduce overfitting bias

- Why it matters?